Large-scale finetune of Illustrious with state-of-the-art techniques and performance.

(TL;DR: works exactly as it should, without the flaws you might encounter in other checkpoints.)

Key advantages:

- Easy and convenient prompting
- Great aesthetics, anatomy, and stability, along with versatility
- Vibrant colors and smooth gradients without any trace of burning
- Full brightness range, even with epsilon
- 22k+ artist styles, many general styles, almost any character

In addition to the above, compared with vanilla Illustrious and NoobAI:

- No more annoying watermarks
- No character bleeding and related side effects (unwanted outfit, style, or composition changes)
- No spawning of strange creatures, SFX in the background, or an extra pair of breasts (1, 2)
- Better coherence (1, 2), prompt following, and anatomy (a significant boost over Illustrious, a slight or negligible one over Noob)
- Artist styles look exactly as they should (and lots of new ones have been added)
- Better prompt following, without ignored tags or the need for (higher weights:1.4)
- Forget about long schizo-negatives
- Stable style without random fluctuations across seeds
- New characters

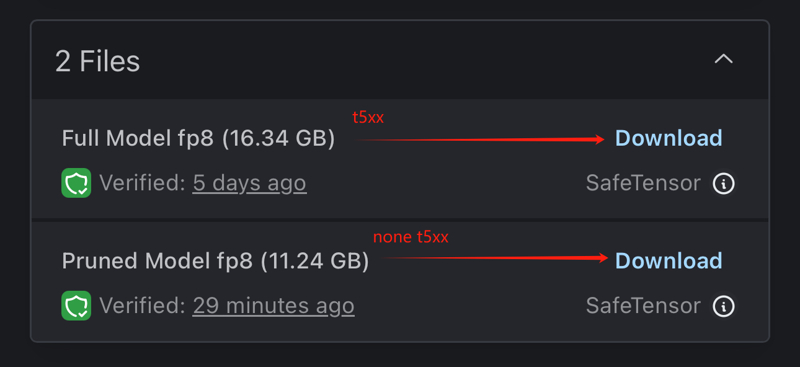

0.6.1 vpred changelog:

- Vpred has been completely retrained from rouwei-base and now runs flawlessly
- It works just as expected from a proper vpred model, allowing anything from 0,0,0 to 255,255,255 without VAE tricks or loss of coherence
- No oversaturation or burning (unless you ask for it, lol)
- Significant boost in stability and small details (probably peak performance for a 4-channel VAE)
- The model no longer relies on brightness meta tags to change brightness, but they still work fine, giving you the best control among all checkpoints
- All features of rouwei are in place
- A few extra popular AI-artist styles/meta styles (thanks Bakariso)

Vpred requires the A1111 dev build, Reforge, or ComfyUI. As mentioned in the NAI3 paper, use a lower CFG of 3..5. No CFG rescale is needed; CFG++ samplers work fine.

The 0.6.0 epsilon version is still a good choice, btw.

A large, well-balanced dataset of 4.5M pictures (0.8M with natural-text captions) picked from over 12M different artworks, a significantly reworked text encoder (TE) and parts of the UNet, and innovative training approaches. All this, combined with a great base model (despite a variety of problems, Illustrious is currently the best base for anime), made it possible to create a checkpoint that meets modern demands and shows unique results.

Dataset cut-off – September 2024.

Features and prompting:

It works well with both short, simple prompts and long, complex ones. However, contradictory or weird tags and concepts won't be ignored and will affect the output. No guardrails, no safeguards, no lobotomy; consider pruning schizo-prompts.

The dataset contains only booru-style tags and (simplified) natural-text expressions. Although it includes a share of furry art, all captions have been converted to classic booru style to avoid a number of problems that can arise when mixing different tagging systems. So e621 tags won't be understood properly.

Basic:

~1 megapixel for txt2img, any aspect ratio with a resolution that is a multiple of 64 (1024×1024, 1152x, 1216×832, …). Sampler: Euler a. CFG: 3..5 for the vpred version, 4..9 for the epsilon version (5-7 is best). Steps: 20..28. Sigmas multiply may improve results a bit; LCM/PCM and exotic samplers are untested. Hires fix: ×1.5 latent + denoise 0.6, or any GAN upscaler + denoise 0.3..0.55.

The vpred version needs a lower CFG of 3..5!
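The resolution rule in the basic settings (about 1 megapixel, both sides multiples of 64) can be turned into a small calculator. This is a minimal sketch, not a feature of any UI: the helper names `snap_resolution` and `hires_size` are hypothetical, and rounding the hires-fix output down to a multiple of 8 (the SDXL latent stride) is an assumption rather than a rule from this card.

```python
import math
from typing import Tuple

TARGET_PIXELS = 1024 * 1024  # ~1 megapixel for txt2img
STEP = 64                    # both sides must be multiples of 64


def snap_resolution(aspect: float, target: int = TARGET_PIXELS) -> Tuple[int, int]:
    """Return (width, height), multiples of 64, near `target` pixels for width/height == aspect."""
    ideal_h = math.sqrt(target / aspect)
    ideal_w = ideal_h * aspect
    width = max(STEP, round(ideal_w / STEP) * STEP)
    height = max(STEP, round(ideal_h / STEP) * STEP)
    return width, height


def hires_size(width: int, height: int, scale: float = 1.5) -> Tuple[int, int]:
    """Hires-fix output size for a given upscale factor, rounded down to a multiple of 8 (assumed)."""
    return int(width * scale) // 8 * 8, int(height * scale) // 8 * 8


if __name__ == "__main__":
    for ratio in (1.0, 1152 / 896, 1216 / 832):
        w, h = snap_resolution(ratio)
        print(f"{w}x{h} -> hires {hires_size(w, h)}")
```

Solving for the ideal height first and then snapping each side independently keeps the pixel count close to 1 MP while the aspect ratio drifts only slightly.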

Quality classification:

Only 4 quality tags:

masterpiece, best quality

low quality, worst quality

Nothing else. Meta tags like lowres have been removed; do not use them. Low-resolution images have been either removed or upscaled and cleaned with DAT, depending on their importance.

Negative prompt:

worst quality, low quality, watermark

That's all; no need for "rusty trombone", "farting on prey" and the like. Do not put tags like greyscale or monochrome in the negative unless you understand what you are doing: it will lead to burning and oversaturation. Colors are fine out of the box.

Artist styles:

Grids with examples, list (can also be found in "training data").

Use artist styles with "by "; it's mandatory. They will not work properly without it.

"by " is used as a meta-token for styles to avoid confusing them with tags or characters of the same or a similar name. This gives better results for styles and at the same time avoids the random style fluctuations you may observe in some other checkpoints.

Combining multiple artists gives very interesting results; the mix can be controlled with prompt weights.
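Because the "by " prefix is mandatory and artists are mixed via weights, a tiny helper can keep the formatting consistent. A minimal sketch, assuming the A1111-style `(text:weight)` emphasis syntax (also understood by Reforge and ComfyUI); the function names are hypothetical, and the weights in the usage line are arbitrary examples, not recommended values.

```python
from typing import Iterable, Optional, Tuple


def artist_token(name: str, weight: Optional[float] = None) -> str:
    """Format one artist style: always prefixed with "by ", optionally weighted."""
    token = name if name.startswith("by ") else f"by {name}"
    if weight is None or weight == 1.0:
        return token
    # A1111-style emphasis syntax: (text:weight)
    return f"({token}:{weight:g})"


def artist_mix(artists: Iterable[Tuple[str, Optional[float]]]) -> str:
    """Join several (name, weight) pairs into one comma-separated style string."""
    return ", ".join(artist_token(name, weight) for name, weight in artists)


print(artist_mix([("nyalia", None), ("koni", 0.8), ("by 748cm", 1.2)]))
```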

General styles:

2.5d, anime screencap, bold line, sketch, cgi, digital painting, flat colors, smooth shading, minimalistic, ink style, oil style, pastel style

Booru tags styles:

1950s (style), 1960s (style), 1970s (style), 1980s (style), 1990s (style), animification, art nouveau, pinup (style), toon (style), western comics (style), nihonga, shikishi, minimalism, fine art parody

and everything from this group.

They can be combined (with artists too) and weighted, in both positive and negative prompts.

0.6.1 vpred also has the following styles:

by nyalia, by flooxyfloox, by koni, by truck-kun, by 748cm, by galawave, by aruhshura, by kyomu, by youlichu, by alens, by chlenix, by cleandongye, by fltccktl, by merratatustle, by xi410, by youmuanon, by memento mori

Characters:

Use the full-name booru tag with proper formatting, like "karin_(blue_archive)" -> "karin \(blue_archive\)". Use skin (outfit) tags for better reproduction, like "karin \(bunny\) \(blue_archive\)". An autocomplete extension can be very useful.
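The conversion rule can be written as a small function. A minimal sketch that mirrors the examples above: underscores between words become spaces, while parenthesized qualifiers are kept verbatim and escaped, since unescaped parentheses would be parsed as emphasis syntax by A1111-style UIs. The function name is hypothetical; an autocomplete extension does the same job interactively.

```python
import re

# A parenthesized qualifier such as "(blue_archive)" or "(bunny)".
QUALIFIER = re.compile(r"(\([^()]*\))")


def booru_to_prompt(tag: str) -> str:
    """Convert a raw booru tag to prompt form, e.g. karin_(blue_archive) -> karin \\(blue_archive\\)."""
    out = []
    for part in QUALIFIER.split(tag):
        if part.startswith("(") and part.endswith(")"):
            # Escape the parentheses so they are not read as prompt weighting.
            out.append("\\(" + part[1:-1] + "\\)")
        else:
            # Plain words: underscores become spaces.
            out.append(part.replace("_", " "))
    return "".join(out)


print(booru_to_prompt("karin_(blue_archive)"))
print(booru_to_prompt("karin_(bunny)_(blue_archive)"))
```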

Natural text:

Use it in combination with booru tags; it works great. Or use only natural text after typing style and quality tags. Or use just booru tags and forget about natural text. It's all up to you.

The dataset contains over 800k pictures with hybrid natural-text captions made by Opus-Vision, GPT-4o, and ToriiGate.
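The prompt order described in this section (quality and style tags first, then booru tags and/or natural text) can be sketched as a prompt builder. Assumptions: quality tags are placed before style tags (the card only says natural text comes after both), the default negative is the one from the negative prompt section, and `build_prompt` is a hypothetical helper.

```python
from typing import Sequence

QUALITY = ("masterpiece", "best quality")
NEGATIVE = ("worst quality", "low quality", "watermark")


def build_prompt(styles: Sequence[str], tags: Sequence[str] = (), text: str = "",
                 quality: Sequence[str] = QUALITY) -> str:
    """Quality tags, then style tags, then booru tags, then optional natural text."""
    parts = [*quality, *styles, *tags]
    if text:
        parts.append(text.strip())
    return ", ".join(parts)


positive = build_prompt(
    styles=["by nyalia", "flat colors"],
    tags=["1girl", "fox ears", "fox tail"],
    text="She is sitting on a bench in the rain, hugging her own tail.",
)
negative = ", ".join(NEGATIVE)
print(positive)
print(negative)
```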

Lots of tail/ear-related concepts:

tail censor, holding own tail, hugging own tail, holding another's tail, tail grab, tail raised, tail down, ears down, hand on own ear, tail around own leg, tail around penis, tail through clothes, tail under clothes, lifted by tail, tail biting, tail insertion, tail masturbation, holding with tail, ...

(booru meaning, not e621), and many others via natural text. Most work perfectly; some require a few rerolls.

Brightness/colors/contrast:

You can use extra meta tags to control them:

low brightness, high brightness, low gamma, high gamma, sharp colors, soft colors, hdr, sdr, limited range

They work in both the epsilon and vpred versions, and work really well.

Unfortunately, there is an issue: the model relies on them too much. Without low brightness, low gamma, or limited range (in the negative), it might be difficult to achieve true 0,0,0 black; the same is often true for white.

Both the epsilon and vpred versions behave like true ZSNR models, with a full range of colors and brightness and without the commonly observed flaws. But they behave differently from each other; just try them.
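One way to apply the brightness meta tags is to append them programmatically depending on the look you want. A minimal sketch: the "dark" preset follows the text above (low brightness in the positive, limited range in the negative); the "bright" preset simply mirrors it with high brightness and is an assumption, as are the preset names and the helper itself. Low gamma / high gamma are alternatives worth trying.

```python
from typing import Dict, List, Tuple

# Hypothetical presets: (extra positive tags, extra negative tags).
BRIGHTNESS_PRESETS: Dict[str, Tuple[List[str], List[str]]] = {
    "dark": (["low brightness"], ["limited range"]),     # for true 0,0,0 blacks
    "bright": (["high brightness"], ["limited range"]),  # assumed mirror for whites
    "neutral": ([], []),
}


def add_brightness(positive: str, negative: str, target: str) -> Tuple[str, str]:
    """Append the brightness meta tags for `target` to existing prompts."""
    pos_extra, neg_extra = BRIGHTNESS_PRESETS[target]
    pos = ", ".join(p for p in [positive, *pos_extra] if p)
    neg = ", ".join(n for n in [negative, *neg_extra] if n)
    return pos, neg


print(add_brightness("masterpiece, best quality, 1girl, night",
                     "worst quality, low quality, watermark", "dark"))
```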

Vpred version

After the 0.6.1 update it works much better, and the earlier issues are no longer observed. It may react to prompts differently, and the composition of CLIP chunks has a bigger influence here, but the same has been observed for all vpred checkpoints so far.

At the moment of release, this is probably the only vpred model that runs okay and doesn't suffer from burned colors, limited range, or the need for extra tweaks, rescales, adjustments and so on (default parameters: 1, 2; CFG rescale: 1, 2, 3; old examples, but they may still be relevant). Like NAI3, it tends to produce wrong skin colors and large red/yellow/blue fills if you push CFG too high. Full experience lmao.

To run the vpred version you will need the dev build of A1111, ComfyUI (with a special loader node), or Reforge. Use the same sampler and step count as for epsilon (Euler a, 20..28 steps), but with CFG 3..5. There is no need for CFG rescale, but you can try it.

The updated version does not rely on brightness tags too much and can produce anything from very dark to very bright images based on prompt content. Still, they are very convenient to use, especially to correct possible artist/concept biases or to get what you want the lazy way.
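The two versions share the sampler and step count but not the CFG range, which is easy to mix up. A minimal sketch of the recommended settings from this card as data, plus a range check; the `Preset` class, the version keys, and `check_settings` are hypothetical and not tied to any UI's API.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class Preset:
    sampler: str
    cfg: Tuple[float, float]   # inclusive CFG range
    steps: Tuple[int, int]     # inclusive step range


# Values from this card; epsilon works best around CFG 5-7.
PRESETS: Dict[str, Preset] = {
    "epsilon": Preset("Euler a", (4.0, 9.0), (20, 28)),
    "vpred": Preset("Euler a", (3.0, 5.0), (20, 28)),
}


def check_settings(version: str, cfg: float, steps: int) -> List[str]:
    """Return warnings for settings outside the recommended ranges (empty list means OK)."""
    preset = PRESETS[version]
    warnings = []
    lo, hi = preset.cfg
    if not lo <= cfg <= hi:
        warnings.append(f"CFG {cfg} is outside {lo}..{hi} for the {version} version")
    lo_s, hi_s = preset.steps
    if not lo_s <= steps <= hi_s:
        warnings.append(f"{steps} steps is outside {lo_s}..{hi_s}")
    return warnings


print(check_settings("vpred", cfg=7.0, steps=24))
```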

Known issues:

Of course there are:

- As mentioned, the model relies too much on brightness meta tags, so you'll have to use them to get full performance (epsilon only)
- Inferior furry-related knowledge compared to NoobAI
- Some cherry-picked character datasets have prompting issues: Yozora and a few cute fox-girls are not consistent
- A small finetune or LoRA polishing small details would be nice; it's up to the community
- To be discovered

Requests for artists/characters in future models are open. If you find an artist, character, or concept that performs weakly or inaccurately, or has a strong watermark, please report it and I will add it explicitly. Follow for new versions.

JOIN THE DISCORD SERVER

License:

Same as Illustrious. Feel free to use it in your merges, finetunes, etc.; just please leave a link.

How it's made

I'll consider writing a report or something like it later.

In short, 98% of the work is dataset preparation. Instead of blindly relying on the tag-frequency-based loss weighting from the NAI paper, a custom guided loss-weighting implementation along with an asynchronous collator for balancing was used. ZTSNR (or close to it) with epsilon prediction was achieved using noise scheduler augmentation.
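The noise scheduler augmentation itself is not described here. For context only, this is a minimal sketch of the standard zero-terminal-SNR beta rescaling from Lin et al. ("Common Diffusion Noise Schedules and Sample Steps are Flawed"), which diffusers exposes as the `rescale_betas_zero_snr` option of `DDIMScheduler`; it is NOT the author's method. It assumes SDXL's scaled-linear schedule (1000 steps, beta from 0.00085 to 0.012). With an exactly zero terminal SNR, epsilon prediction carries no information at the last timestep (the input is pure noise), which is presumably why epsilon can only get "close to" ZTSNR.

```python
import math
from typing import List


def scaled_linear_betas(n: int = 1000, beta_start: float = 0.00085,
                        beta_end: float = 0.012) -> List[float]:
    """SD/SDXL 'scaled_linear' schedule: betas are linear in sqrt space."""
    s, e = math.sqrt(beta_start), math.sqrt(beta_end)
    return [(s + (e - s) * i / (n - 1)) ** 2 for i in range(n)]


def alphas_cumprod(betas: List[float]) -> List[float]:
    """Cumulative products alpha_bar_t = prod(1 - beta_i)."""
    out, prod = [], 1.0
    for b in betas:
        prod *= 1.0 - b
        out.append(prod)
    return out


def rescale_zero_terminal_snr(betas: List[float]) -> List[float]:
    """Shift/scale sqrt(alpha_bar) so the last step has SNR 0 and the first step is unchanged."""
    sqrt_ab = [math.sqrt(a) for a in alphas_cumprod(betas)]
    first, last = sqrt_ab[0], sqrt_ab[-1]
    sqrt_ab = [(a - last) * first / (first - last) for a in sqrt_ab]
    ab = [a * a for a in sqrt_ab]
    # Convert cumulative products back to per-step alphas, then to betas.
    alphas = [ab[0]] + [ab[i] / ab[i - 1] for i in range(1, len(ab))]
    return [1.0 - a for a in alphas]


def snr(alpha_bar: float) -> float:
    return alpha_bar / (1.0 - alpha_bar)


base = scaled_linear_betas()
zsnr = rescale_zero_terminal_snr(base)
print(f"terminal SNR before: {snr(alphas_cumprod(base)[-1]):.6f}")
print(f"terminal SNR after:  {snr(alphas_cumprod(zsnr)[-1]):.6f}")
```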

Thanks:

First of all, I'd like to acknowledge everyone who supports open source and develops and improves code. Thanks to the authors of Illustrious for releasing the model, and thanks to the NoobAI team for being pioneers in open finetuning at such a scale, sharing experience, and raising and solving issues that previously went unnoticed.

Personal:

Artists who wish to remain anonymous, for sharing private works; Soviet Cat – GPU sponsoring; Sv1. – LLM access, captioning, code; K. – training code; Bakariso – datasets, testing, advice, insights; NeuroSenko – donations, testing, code; T.,[] – datasets, testing, advice; rred, dga, Fi., ello – donations; other fellow brothers who helped. Love you so much.

And of course everyone who gave feedback and made requests; it's really valuable.

If I forgot to mention anyone, please let me know.

Donations

If you want to support me: share my models, leave feedback, make a cute picture of a kemonomimi girl. And of course, support the original artists.

AI is my hobby; I'm spending my own money on it and not begging for donations. However, it has turned into a large-scale and expensive undertaking. Consider supporting it to accelerate new training and research.

(Just keep in mind that I can waste it on alcohol or cosplay girls)

BTC: bc1qwv83ggq8rvv07uk6dv4njs0j3yygj3aax4wg6c

ETH/USDT(e): 0x04C8a749F49aE8a56CB84cF0C99CD9E92eDB17db

If you can offer GPU time (A100+), PM me.
